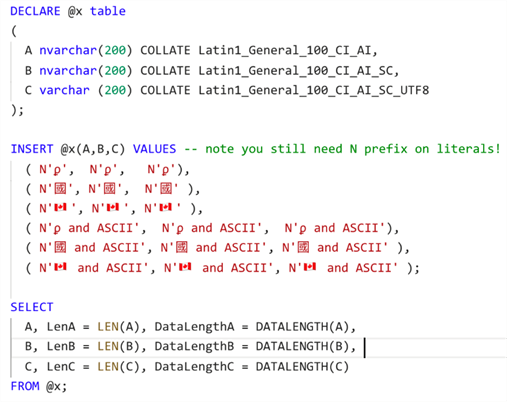

Unicode is not a binary representation per se. Encodings say how the Unicode code points (numerical values) are represented in memory (the binary layout of the number). UTF-8 and UTF-16 are the most widely used encodings. So you don't have a Unicode string that you want to convert to UTF-8 or UTF-16: you have a Unicode string represented in your program using a given encoding.

When a VS project says "Unicode charset", it actually means "characters are encoded as UTF-16". Characters are stored in the WCHAR type (as opposed to char), which falls back on the standard C type wchar_t, which takes 16 bits on Win32. Therefore, you can use sqlite3_open16() directly. No conversion is required if you use Unicode strings such as CString or wstring. You will have to make sure you pass a WCHAR pointer (cast to void *), such as for a CString: (void*)(LPCWSTR)strFilename. Seems lame! Even if this lib is cross-platform, I guess they could have defined a wide char type that depends on the platform and is less unfriendly to the API than a void *.

Variant 1 of the conversion code was created from the Wikipedia descriptions of the UTF-8 and UTF-16 encodings. If you find bugs or any improvements (especially in Variant 1) please tell me, I'll fix them. The low-level functions Utf8To32, Utf32To8, Utf16To32 and Utf32To16 are the only things that are really needed for conversion. The rest of the code, I mean the UtfHelper-related structure and functions, is not strictly necessary: they are just helpers, mainly created to handle std::wstring in a cross-platform way, because wchar_t is usually 32-bit on Linux and 16-bit on Windows.
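To make the "encoding is just a binary layout of code points" idea concrete, here is a minimal from-scratch sketch of the UTF-8 to UTF-32 to UTF-16 path. The names DecodeUtf8, EncodeUtf16 and Utf8ToUtf16Sketch are hypothetical and are not the Utf8To32 / Utf32To16 functions mentioned above. It is an illustration only: it checks lead and continuation bytes but does not reject overlong UTF-8 forms.

```cpp
#include <cassert>
#include <cstddef>
#include <stdexcept>
#include <string>

// Hypothetical names for illustration; not the author's Utf8To32 / Utf32To16.

// Decode UTF-8 bytes into Unicode code points (UTF-32).
std::u32string DecodeUtf8(const std::string& in) {
    std::u32string out;
    for (std::size_t i = 0; i < in.size();) {
        const unsigned char lead = static_cast<unsigned char>(in[i]);
        char32_t cp;
        std::size_t len;
        // The lead byte tells how many bytes the sequence has.
        if (lead < 0x80)                { cp = lead;        len = 1; }
        else if ((lead & 0xE0) == 0xC0) { cp = lead & 0x1F; len = 2; }
        else if ((lead & 0xF0) == 0xE0) { cp = lead & 0x0F; len = 3; }
        else if ((lead & 0xF8) == 0xF0) { cp = lead & 0x07; len = 4; }
        else throw std::runtime_error("invalid UTF-8 lead byte");
        if (i + len > in.size())
            throw std::runtime_error("truncated UTF-8 sequence");
        // Each continuation byte (10xxxxxx) contributes 6 payload bits.
        for (std::size_t k = 1; k < len; ++k) {
            const unsigned char c = static_cast<unsigned char>(in[i + k]);
            if ((c & 0xC0) != 0x80)
                throw std::runtime_error("invalid UTF-8 continuation byte");
            cp = (cp << 6) | (c & 0x3F);
        }
        out.push_back(cp);
        i += len;
    }
    return out;
}

// Encode code points as UTF-16 code units, using surrogate pairs above U+FFFF.
std::u16string EncodeUtf16(const std::u32string& in) {
    std::u16string out;
    for (char32_t cp : in) {
        if (cp > 0x10FFFF || (cp >= 0xD800 && cp <= 0xDFFF))
            throw std::runtime_error("invalid code point");
        if (cp < 0x10000) {
            out.push_back(static_cast<char16_t>(cp));
        } else {
            cp -= 0x10000;  // 20 bits remain: high 10 and low 10
            out.push_back(static_cast<char16_t>(0xD800 + (cp >> 10)));
            out.push_back(static_cast<char16_t>(0xDC00 + (cp & 0x3FF)));
        }
    }
    return out;
}

// UTF-8 -> UTF-16 by going through code points.
std::u16string Utf8ToUtf16Sketch(const std::string& utf8) {
    return EncodeUtf16(DecodeUtf8(utf8));
}

// Usage: U+1F600 is 4 bytes in UTF-8 and one surrogate pair in UTF-16.
inline void DemoUtf8ToUtf16() {
    const std::u16string expected{char16_t(0xD83D), char16_t(0xDE00)};
    assert(Utf8ToUtf16Sketch("\xF0\x9F\x98\x80") == expected);
}
```

Going through UTF-32 keeps each step small: one function only knows the UTF-8 layout, the other only knows the UTF-16 layout.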

I implemented two variants of conversion between UTF-8, UTF-16 and UTF-32. The first variant fully implements all conversions from scratch; the second uses the standard std::codecvt and std::wstring_convert (these two classes are deprecated starting from C++17 but still exist, and are guaranteed to exist in C++11/C++14). Both variants are C++11 compliant. They compile with Clang, GCC and MSVC (see the "Try it online!" links down below) and have been tested on Windows and Linux.

To convert UTF-8 to UTF-16 just call Utf32To16(Utf8To32(str)), and to convert UTF-16 to UTF-8 call Utf32To8(Utf16To32(str)). Or you may just use my handy helper function UtfConv(std::string("abc")) for UTF-8 to UTF-16 or UtfConv(std::wstring(L"abc")) for UTF-16 to UTF-8; UtfConv can actually convert from any UTF-encoded string to any other. See examples of these and other usages inside the Test(cs) macro.

You have to save both of my code snippets in a file with UTF-8 encoding and pass the options -finput-charset=UTF-8 -fexec-charset=UTF-8 to Clang/GCC, or /utf-8 to MSVC. This UTF-8 saving and these options are needed only if you put literal strings with non-ASCII characters in the source, like I did in my code for testing purposes only. To use the functions themselves you don't need them.

Likewise, the extra includes (SetConsoleOutputCP is declared in the windows.h header, std::setlocale in clocale) and the calls to SetConsoleOutputCP(65001) and std::setlocale(LC_ALL, "en_US.UTF-8") are needed only for testing purposes, to set up the console and output UTF-8 to it correctly.

If you don't like my code then you may use the almost-single-header C++ library utfcpp, which should be very well tested by many users.
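As a rough sketch of what the second, standard-library approach can look like, the block below converts between UTF-8 and UTF-16 with std::wstring_convert and std::codecvt_utf8_utf16. The wrapper names Utf8ToUtf16Std and Utf16ToUtf8Std are hypothetical and are not the UtfConv helper described above. It uses char16_t rather than wchar_t so the result is UTF-16 on every platform, which is an assumption of this sketch rather than something the original code is known to do.

```cpp
// The facilities used here are deprecated since C++17; on GCC/Clang this
// pragma silences the resulting warnings for this illustration.
#if defined(__GNUC__)
#pragma GCC diagnostic ignored "-Wdeprecated-declarations"
#endif

#include <cassert>
#include <codecvt>
#include <locale>
#include <string>

// Hypothetical wrapper names for illustration; not the author's UtfConv.

// UTF-8 bytes -> UTF-16 code units.
std::u16string Utf8ToUtf16Std(const std::string& utf8) {
    std::wstring_convert<std::codecvt_utf8_utf16<char16_t>, char16_t> conv;
    return conv.from_bytes(utf8);  // throws std::range_error on invalid input
}

// UTF-16 code units -> UTF-8 bytes.
std::string Utf16ToUtf8Std(const std::u16string& utf16) {
    std::wstring_convert<std::codecvt_utf8_utf16<char16_t>, char16_t> conv;
    return conv.to_bytes(utf16);   // throws std::range_error on invalid input
}

// Usage: a round trip should give back the original bytes.
inline void DemoRoundTrip() {
    const std::string smiley = "\xF0\x9F\x98\x80";  // U+1F600 in UTF-8
    assert(Utf16ToUtf8Std(Utf8ToUtf16Std(smiley)) == smiley);
}
```

Writing the non-ASCII character with byte escapes avoids the source-file encoding and compiler charset options discussed above.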
